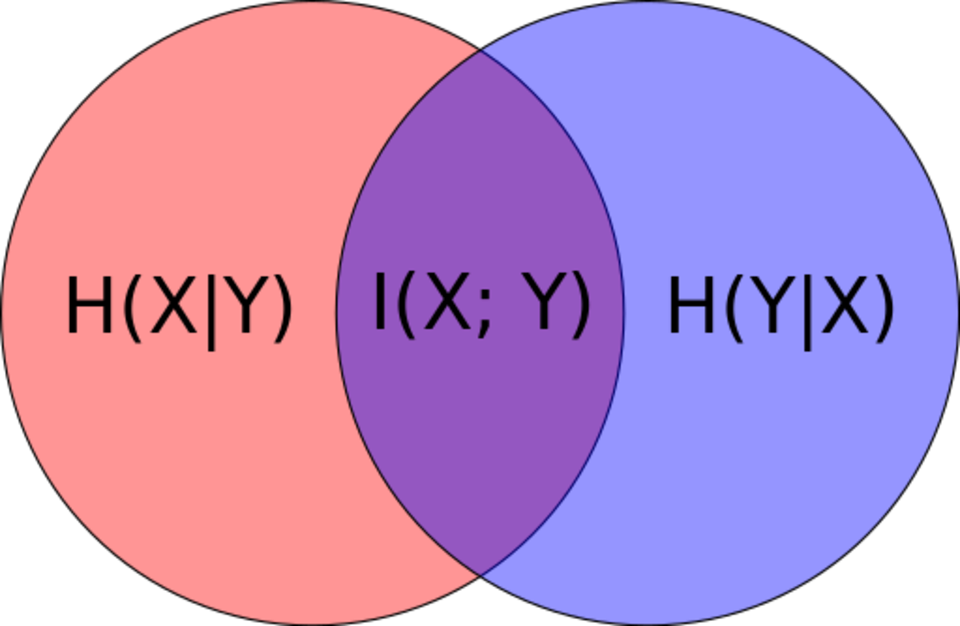

Entropy $H(X)$ (i.e. the expected surprisal) of a coin flip, measured in bits, graphed versus the bias of the coin $\Pr(X = 1)$, where $X = 1$ represents a result of heads.

Entropy is measured between 0 and 1 when there are two classes. Depending on the number of classes in your dataset, entropy can be greater than 1 (with $k$ classes the maximum is $\log_2 k$ bits), but it means the same thing: a very high level of disorder. A value near the maximum is considered high entropy, a high level of disorder (meaning a low level of purity).

Definition. The conditional entropy of $X$ given $Y$ is $H(X \mid Y) = -\sum_{x,y} p(x,y)\,\log p(x \mid y)$. Symmetrically, $H(Y \mid X)$ reveals how much variability remains in $Y$ when $X$ is fixed. The joint entropy $H(X,Y) = -\sum_{x,y} p(x,y)\,\log p(x,y)$ measures how much uncertainty there is in the two random variables $X$ and $Y$ taken together.

For the conditional cross-entropy of a model $q(y \mid x)$, the main difference from your approach is that the expected value is taken over the whole $\mathcal{X} \times \mathcal{Y}$ domain (weighting by $p_{\text{data}}(x, y)$ instead of $p_{\text{data}}(y \mid x)$): $H(p_{\text{data}}, q) = -\sum_{x,y} p_{\text{data}}(x,y)\,\log q(y \mid x)$. Therefore the conditional cross-entropy is not a random variable, but a number. If you find any inaccuracies in this approach, or a better explanation, I'll be happy to read about it.

Information Gain, a well-known technique in many domains (perhaps most famous as a splitting criterion for decision trees), is a direct application of conditional entropy: $IG(Y; X) = H(Y) - H(Y \mid X)$. Conditional entropy also underlies V-Measure, a conditional entropy-based external cluster evaluation measure (Andrew Rosenberg and Julia Hirschberg, "V-Measure: A Conditional Entropy-Based External Cluster Evaluation Measure").

An alternative view quantifies information as 'dits' (distinctions), a measure on partitions; dits can be converted into Shannon's bits to get the formulas for conditional entropy, etc.
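The quantities described above can be checked numerically. Below is a minimal Python sketch, assuming discrete values given as plain lists and entropies in bits (log base 2), estimated from empirical frequencies. The function names (`entropy`, `binary_entropy`, `conditional_entropy`, `joint_entropy`, `information_gain`) and the toy `outlook`/`play` data are hypothetical illustrations, not taken from the original post.

```python
import math
from collections import Counter

def entropy(labels):
    """Shannon entropy in bits of the empirical distribution of `labels`."""
    n = len(labels)
    # Sum of p * log2(1/p) over observed values (written this way to avoid -0.0).
    return sum((c / n) * math.log2(n / c) for c in Counter(labels).values())

def binary_entropy(p):
    """Entropy in bits of a coin with Pr(X = 1) = p; peaks at 1 bit when p = 0.5."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)

def conditional_entropy(ys, xs):
    """H(Y | X) in bits: entropy of Y within each group of equal x, weighted by p(x)."""
    n = len(xs)
    groups = {}
    for x, y in zip(xs, ys):
        groups.setdefault(x, []).append(y)
    return sum(len(g) / n * entropy(g) for g in groups.values())

def joint_entropy(xs, ys):
    """H(X, Y) in bits: entropy of the pairs (x, y) taken together."""
    return entropy(list(zip(xs, ys)))

def information_gain(ys, xs):
    """IG(Y; X) = H(Y) - H(Y | X): how much knowing X reduces uncertainty about Y."""
    return entropy(ys) - conditional_entropy(ys, xs)

# Hypothetical toy data: a feature `outlook` and a binary label `play`.
outlook = ["sunny", "sunny", "rain", "rain", "overcast", "overcast"]
play = ["no", "no", "yes", "no", "yes", "yes"]

print(entropy(play))                                 # 1.0 (two balanced classes)
print(entropy(["a", "b", "c", "d"]))                 # 2.0 (four classes: above 1)
print(binary_entropy(0.5))                           # 1.0 (fair coin)
print(round(conditional_entropy(play, outlook), 3))  # 0.333
print(round(information_gain(play, outlook), 3))     # 0.667
# Chain rule: H(X, Y) = H(X) + H(Y | X)
print(math.isclose(joint_entropy(outlook, play),
                   entropy(outlook) + conditional_entropy(play, outlook)))  # True
```

Computing $H(Y \mid X)$ as a $p(x)$-weighted average of per-group entropies is equivalent to $-\sum_{x,y} p(x,y)\log p(y \mid x)$, and it is the quantity a decision tree compares across candidate features when it splits on information gain.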

I'm trying to understand the relationship between maximum likelihood estimation for a function of the type $\hat{p}(y \mid x)$ and the conditional cross-entropy. The conditional entropy is the entropy of the conditional distribution $p(y \mid x)$, averaged over $x$. Conditional entropy is a very useful concept.
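To connect conditional cross-entropy with maximum likelihood, here is a minimal Python sketch, assuming a finite sample of $(x, y)$ pairs stands in for $p_{\text{data}}(x, y)$ and natural logarithms (nats). The helper `conditional_cross_entropy` and the model family `bernoulli_model` (which deliberately ignores $x$) are hypothetical. The per-sample losses $-\log q(y \mid x)$ vary with $(x, y)$, while their average over the joint sample is a single number; minimising that number over the model parameter is the same as maximising the likelihood.

```python
import math

def conditional_cross_entropy(pairs, q):
    """Empirical conditional cross-entropy in nats.

    Averages -log q(y | x) over the sampled (x, y) pairs, so the expectation
    is taken over the joint p_data(x, y) and the result is one number.
    `q(y, x)` is a hypothetical model returning the probability of y given x.
    """
    return -sum(math.log(q(y, x)) for x, y in pairs) / len(pairs)

def bernoulli_model(theta):
    """Hypothetical model family: q(y = 1 | x) = theta for every x."""
    return lambda y, x: theta if y == 1 else 1.0 - theta

# Toy sample of (x, y) pairs; three of the four labels are 1.
data = [("a", 1), ("b", 1), ("a", 0), ("b", 1)]

# Per-sample losses -log q(y | x) differ from pair to pair (a random variable).
model = bernoulli_model(0.6)
print([round(-math.log(model(y, x)), 3) for x, y in data])  # [0.511, 0.511, 0.916, 0.511]

# Their average over the joint sample is a single number.
print(round(conditional_cross_entropy(data, model), 3))  # 0.612

# Minimising the cross-entropy over theta is maximising the likelihood.
grid = [i / 100 for i in range(1, 100)]
best = min(grid, key=lambda t: conditional_cross_entropy(data, bernoulli_model(t)))
print(best)  # 0.75
```

With three of the four labels equal to 1, the grid search lands on $\theta = 0.75$, the empirical frequency, which is the maximum-likelihood estimate for this model family.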
